Notes on atmospheric perspective and distant mountains

I don't know if it's because I come from a supremely flat country, or in spite of it, but I love terrain with elevation differences. Seeing cliffs or mountains in the distance fills me with a special kind of calm. The game I'm currently working on, The Big Forest, is full of mountain forests too.

I've just returned from three weeks of vacation in Japan, and I had ample opportunities to admire and study views with layers upon layers of mountains in the distance. While studying these views, something clicked for me about the shades of mountains at different distances, something that now seems obvious in retrospect. I'll get back to that.

Note: None of the photos here have any post-processing applied, apart from the light processing an iPhone 13 mini does out of the box with default settings. I often looked at the photos right after taking them, and they looked pretty faithful to what I could see with my own eyes.

The blue tint of atmospheric perspective

A beautiful thing about mountains in the far distance is how they appear as colored shapes behind each other in various shades of blue. Sometimes it looks distinctly like a watercolor painting.

In an art context, the blue tint that increases with distance is called aerial perspective or atmospheric perspective (Wikipedia).

I've tried to capture this in The Big Forest too by making things more blue tinted in the distance. In terms of 3D graphics techniques, I implemented it by using the simple fog feature which is built into Unity and most other engines. By setting the fog color to blue, everything fades towards blue in the distance. It can produce a more or less convincing aerial perspective effect. Using fog for this purpose is as old as the fog feature itself. The original OpenGL documentation mentions that the fog feature using the exponential mode "can be used to represent a number of atmospheric effects", implying it's not only for simulating fog. For our purposes, let's call it the fog trick.
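For reference, here is what that fog trick boils down to. This minimal Python sketch follows the classic OpenGL `GL_EXP` formula, f = e^(-density·z), with the final color C = f·C_surface + (1 - f)·C_fog. The density and the two colors are made-up values for illustration, not the settings used in The Big Forest:

```python
import math

def exp_fog_factor(distance, density):
    """Fraction of the surface color that survives (OpenGL GL_EXP mode)."""
    return math.exp(-density * distance)

def apply_fog(surface_rgb, fog_rgb, distance, density):
    """Blend a surface color towards a single fixed fog color."""
    f = exp_fog_factor(distance, density)
    return tuple(f * s + (1.0 - f) * c for s, c in zip(surface_rgb, fog_rgb))

# Illustrative values only: a forest green fading towards a blue fog color.
FOREST = (0.10, 0.30, 0.10)
BLUE_FOG = (0.35, 0.50, 0.80)

for km in (0, 5, 20, 80):
    print(km, tuple(round(c, 3) for c in apply_fog(FOREST, BLUE_FOG, km, 0.05)))
```

Whatever the distance, the result lies on the straight line between the surface color and the fog color, so distant objects can only ever approach that one color.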

Which color does things fade towards?

I long held a misconception that things in the distance (like mountains) get tinted towards whatever color the sky behind them has. In daytime, when the sky is blue, the color of mountains approaches that same blue the further away they are. At sunset, when the sky is red, the mountains approach that red color too. A hazy day where the sky is white? The mountains fade towards white too.

Of course, the sky is never a single color at once. Even at its bluest, it's usually paler at the horizon than straight above.

This raises a dilemma when using the fog trick. Set the fog color too close to the blue sky above, and the distant mountains appear unnatural near the pale horizon. But set the fog color to the pale color of the sky at the horizon, and the result is even worse: Some mountain peaks may then end up looking paler than the sky right behind them, and that looks very bad, since it never happens in reality.

For a long time I wished Unity had a way to fade towards the skybox color (the color of the sky at a given pixel) rather than a single fixed color.

In practice, it's not too difficult to settle on a compromise color which looks mostly fine. It's just still not ideal, for reasons that will become clear later.

Are more distant mountains more pale?

Now, while I was tweaking the fog color in my game and in general contemplating atmospheric perspective, I could see from certain reference photos I'd found on the Internet that mountains look paler at great distances. Not just paler than their native color – green if covered in trees – but also paler than the deep blue tint they take on at less extreme distances.

This was counter-intuitive. How could the atmosphere tint things an increasingly saturated blue up to a certain distance, but a less saturated one beyond that point? The thing is, you never know how random reference photos have been processed, and which filters might have been applied. For a while, I thought it simply came down to tone mapping.

Tone mapping is a technique used in digital photography and computer graphics to map very high contrasts observed in the real world (referred to as high dynamic range) into lower contrasts representable in a regular photograph or image (low dynamic range). For context, the sky can easily be a hundred times brighter than something on the ground that's in shadow. Our eyes are good at perceiving both despite the extreme difference in brightness, but a photograph or conventional digital image cannot represent one thing that's a hundred times brighter than another without losing most detail in one or the other.

If you try to take a picture with both sky and ground, the sky may appear white in the photo even though it looked blue to your eyes. Or if the sky appears as blue in the photo as it did to your eyes, then the ground may appear black. Tone mapping makes it possible to achieve a compromise: The ground can be legible while the sky also appears blue, but it's a paler blue in the photo than it appeared to your eyes. Tone mapping typically turns non-representable brightness into paleness instead.
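The three outcomes described above can be reproduced with a toy example. This sketch assumes made-up linear HDR values (a sky about a hundred times brighter than shadowed ground), ignores display gamma, and uses the simple per-channel Reinhard operator x / (1 + x) as a stand-in for whatever tone mapping a real camera applies:

```python
def expose(rgb, exposure):
    return tuple(c * exposure for c in rgb)

def clip(rgb):
    """No tone mapping: anything above 1.0 is simply cut off."""
    return tuple(min(c, 1.0) for c in rgb)

def reinhard(rgb):
    """Per-channel Reinhard operator: compresses bright values towards 1.0."""
    return tuple(c / (1.0 + c) for c in rgb)

def saturation(rgb):
    """0 for grey or white, approaching 1 for a pure hue."""
    hi, lo = max(rgb), min(rgb)
    return 0.0 if hi == 0 else (hi - lo) / hi

# Made-up linear HDR values, in arbitrary units.
SKY = (20.0, 40.0, 100.0)
GROUND = (0.2, 0.3, 0.1)

# 1. Exposed for the ground: the ground is legible, the sky clips to white.
sky_white = clip(expose(SKY, 1.0))
# 2. Exposed for the sky: the sky is blue, the ground is nearly black.
sky_blue, ground_black = clip(expose(SKY, 0.01)), clip(expose(GROUND, 0.01))
# 3. Tone mapped: the ground is legible, the sky is still bluish but much paler.
sky_pale, ground_ok = reinhard(SKY), reinhard(GROUND)
```

In the third case the blue channel is still the largest, but the compression of the bright channels has turned most of the sky's brightness into paleness.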

So I thought: Distant mountains approach the color – and brightness – of the sky, so they may appear increasingly pale in photos simply because they're increasingly bright in reality, and the brightness gets turned into paleness by tone mapping.

However, while observing distant mountains with my own eyes on the Japan trip, it became clear that this theory just doesn't hold up.

Revised theory

Some of my thinking was partially right. Distant mountains do take on the color of the sky, just in a slightly different way than I thought. And tone mapping does sometimes affect the paleness of the sky and distant mountains.

But on this trip I had ample opportunity to study mountains layered at many distances behind each other. I could observe with my own eyes (no tone mapping involved) that they do get paler with distance. (It's not that I've never seen mountains in the distance with my own eyes before, but on previous occasions I guess I didn't think very analytically about the exact shades.) Furthermore I've taken a lot of pictures of it, where (unlike random pictures I find on the Internet) I've verified that the colors and shades look about the same in the pictures as they looked to my eyes in real life.

So here's what finally clicked for me:

Mountains transition from a deep blue tint in the mid-distance to a paler tint in the far distance for the same reason that the sky is paler near the horizon.

To the best of my current understanding, the complex scientific reason relates to how Rayleigh scattering (Wikipedia) and possibly Mie scattering (Wikipedia) interact with sunlight and the human visual system, but the end result is this:

As you look through an increasing distance of air (in daytime), the appearance of the air changes from transparent, to blue, to nearly white. (Presumably this goes through a curved trajectory in color space).

- When you look at the sky, there's more air to look through near the horizon than when looking straight up, so the horizon is paler.

- Similarly, there's also more air to look through when looking at a more distant mountain compared to a less distant one, so the more distant one is paler.

A small corollary to this is that the atmospheric tint of a mountain can only ever be less pale than the sky immediately behind it, since you're always looking through a greater distance of air when looking just past the mountain than when looking directly at it.
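The "transparent, to blue, to nearly white" progression and the corollary both fall out of even a crude model. This sketch is not a Rayleigh scattering simulation. It assumes made-up per-channel scattering coefficients where blue simply scatters most, white sunlight, in-scattered light that saturates at the sunlight color, and, for the sky, a flat slab of air about 8 km thick (roughly the atmosphere's scale height) that ignores the Earth's curvature. Paleness is measured as saturation:

```python
import math

# Assumed per-channel scattering coefficients per km (red, green, blue).
# Illustrative values only: the one property that matters is that blue scatters most.
BETA = (0.02, 0.05, 0.12)

def air_color(path_km):
    """Light scattered towards the viewer by a column of air, assuming white
    sunlight and ignoring anything behind the air."""
    return tuple(1.0 - math.exp(-b * path_km) for b in BETA)

def sky_path_km(elevation_deg, slab_km=8.0):
    """Length of a view ray through a flat slab of air; grows towards the horizon."""
    elevation = math.radians(max(elevation_deg, 1.0))  # clamp: a 0-degree ray never exits a flat slab
    return slab_km / math.sin(elevation)

def saturation(rgb):
    """0 for grey or white, approaching 1 for a pure hue."""
    hi, lo = max(rgb), min(rgb)
    return 0.0 if hi == 0 else (hi - lo) / hi

for km in (1, 10, 50, 200):
    rgb = air_color(km)
    print(f"{km:>4} km: brightness {max(rgb):.2f}, saturation {saturation(rgb):.2f}")
```

With these numbers, 1 km of air is faint (nearly transparent) but bluish, 10 km is a strong blue, and 200 km is almost white. In this model saturation only decreases with path length, so the air in front of a mountain is always more saturated than the sky just behind it, which has a longer path.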

This can be generalized so that it works not only in daytime but for sunsets too: Closer mountains are tinted similar to the sky further up, while more distant mountains are tinted similar to the sky nearer the horizon. In practice though, it's hard to find photos showing red-tinted mountains; much more common are blue-tinted mountains flush against the red horizon. Possibly the shadows from the mountains at sunset play a role, or perhaps the distance required for a red tint is so large that mountains are almost never far enough away.

I sort of knew the part about the horizon being paler due to looking through more air, but for some reason hadn't connected it to mountains at different distances. In retrospect it's obvious to me, and I'm sure many readers of this blog were well aware of it and find it amusing that I only found out about it now. On the other hand, I can also see why it eluded me for a long time:

- It's just not intuitive that a single effect fades things towards one color or another depending on the magnitude.

- It's hard to find good and reliable reference photos, and unclear how to interpret them given the existence of filters and tone mapping.

- The Wikipedia page on aerial perspective doesn't mention that the color goes from deeper blue to paler blue with distance. You could read the entire page and just come away with the same idea I had, that aerial perspective simply fades towards one color.

- If you go deeper and read the Wikipedia pages on Rayleigh scattering and Mie scattering, they don't mention it either. The one on Rayleigh scattering has a section about "Cause of the blue color of the sky", but it doesn't mention anything about the horizon being paler.

In fact, I've not yet found any resource that is explicit about the fact that the color of increasingly distant mountains goes from deeper blue to paler blue. It's even hard to find references that explain why the sky is paler near the horizon, and the few obscure Reddit and Stack Exchange posts I did find did not agree on whether the paleness of the horizon is due to Rayleigh scattering or Mie scattering.

I found and tinkered with this Shadertoy, and if that's anything to go by, the pale horizon comes from Rayleigh scattering, while Mie scattering primarily produces a halo around the sun. I don't know how to add mountains to it though.

All right, that was a lot of text. Here's another nice photo to look at:

I'm still not really certain of much, and you should take my conclusions with a grain of salt. I haven't yet found any definitive validation of my theory that mountains are paler with distance for the same reason the horizon is paler; it's just my best explanation based on my observations so far. I find it somewhat strange that it's so difficult to find good and straightforward information on this topic (at least for people who are not expert graphics programmers or academics), but perhaps some knowledgeable readers of this post can shed additional light on things.

One thing is pretty clear: An accurate rendition of atmospheric perspective (at great distances) cannot be achieved in games and other computer graphics by using the fog trick, or other approaches that fade towards a single color. I haven't yet researched alternatives much, but I'm sure there must be a variety of off-the-shelf solutions for Unity and other engines. I've learned that Unreal has a powerful and versatile Sky Atmosphere Component built-in, while Unity's HD render pipeline has a Physically Based Sky feature, which, however, seems problematic according to various forum threads. If you have experience with any atmospheric scattering solutions, feel free to share it in the comments below.

It's also worth noting though that the distances at which mountains fade from the deepest blue to paler blue colors can be quite extreme, and may not be relevant at all for a lot of games. Plenty of games have shipped and looked great using the fog trick, despite its limitations.

Light and shadow

Let's finally move on from the subject of paleness and look at how light and shadow interact with atmospheric perspective.

Here are two pictures of the same mountains (the big one is the volcano Mount Iwate) from almost the same angle, at two different times. In the first, where the mountain sides facing the camera are in shadow, the mountains appear as flat colors. In the second you can see spots of snow and other details on the volcano, lit by the sun. The color of the atmosphere is also a deeper blue in the second picture, probably due to being closer to midday.

And here's a picture from Yama-dera (Risshaku-ji temple), where the partial cloud cover lets us see mountains in both sunlight and shadow simultaneously. This makes it very clear that mountain sides at the same distance appear blue when in shadow and green when in light. The blue color of the atmosphere is of course still there in the sunlit parts of the surface, but it's overpowered by the stronger green light from the sunlit trees.
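The same kind of toy model shows why sunlit and shadowed slopes at the same distance differ: the air adds the same blue light in front of both, and only the light coming from the surface itself changes. The scattering coefficients, the distance, the assumed brightness limit of the in-scattered light, and the surface colors are all made up for illustration:

```python
import math

BETA = (0.02, 0.05, 0.12)   # assumed scattering per km (red, green, blue)
AIR_LIMIT = 0.25            # assumed brightness that in-scattered light saturates at

def seen_through_air(surface_rgb, distance_km):
    """Surface light attenuated by the air, plus blue light scattered in by the air."""
    out = []
    for c, b in zip(surface_rgb, BETA):
        transmittance = math.exp(-b * distance_km)
        out.append(c * transmittance + AIR_LIMIT * (1.0 - transmittance))
    return tuple(out)

def dominant_channel(rgb):
    return ("red", "green", "blue")[rgb.index(max(rgb))]

SUNLIT_TREES = (0.15, 0.35, 0.10)
SHADED_TREES = tuple(c * 0.1 for c in SUNLIT_TREES)  # same trees, 10x less light

for name, surface in (("sunlit", SUNLIT_TREES), ("shadow", SHADED_TREES)):
    print(name, dominant_channel(seen_through_air(surface, 15.0)))
```

Both slopes receive exactly the same added air light; the sunlit one simply reflects enough green light to outweigh it.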

That's all the observations on atmospheric perspective I made for now. I would love to hear your thoughts and insights! If you'd like to see more inspiring photos from my Japan trip (for example from a mystical forest stairway), I wrote another post about that.

Resources for further study

Here are links to some resources I and others have come across while looking into this topic.

From my perspective, these resources are mostly to get a better understanding of the subject, and the theoretical possibilities. In practice, it's not straightforward to implement one's own atmospheric scattering solution in an existing engine. Even in cases where the math itself is simple enough, the graphics pipeline plumbing required to make the effect apply to all materials (opaque and transparent) is often non-trivial or outright prohibitive for people like me, who aren't expert graphics programmers.

- A simple improvement upon single-color fog is to use different exponents for the red, green, and blue channels. This can be used to make the tint of the atmosphere shift from blue to white with distance. There's example shader code for it in this post by Inigo Quilez, though unfortunately it lacks images illustrating the effect. The post also covers how to fade towards a different color near the sun, and other effects.

- Here's a 2020 academic paper, video and code repository for the atmospheric rendering in Unreal, and here's the documentation.

- Here's the documentation for Unity's Physically Based Sky.

- A 2008 paper that gets referenced a lot is Precomputed Atmospheric Scattering by Bruneton and Neyret, with code repository here. Unity's solution is based on it, and it's cited and compared in Unreal's paper.
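As a rough illustration of the "different color near the sun" idea mentioned above: the fog color can be chosen per pixel by blending between two colors based on how closely the view direction points at the sun. The two colors, the sun direction, and the sharpness exponent below are assumptions for illustration:

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lerp(a, b, t):
    return tuple(x + (y - x) * t for x, y in zip(a, b))

AWAY_FROM_SUN = (0.5, 0.6, 0.7)   # assumed bluish haze color
TOWARDS_SUN = (1.0, 0.9, 0.7)     # assumed warm color near the sun

def fog_color(view_dir, sun_dir, sharpness=8.0):
    """Blend the fog color towards a warmer tint as the view direction nears the sun."""
    cos_angle = sum(a * b for a, b in zip(normalize(view_dir), normalize(sun_dir)))
    sun_amount = max(cos_angle, 0.0) ** sharpness
    return lerp(AWAY_FROM_SUN, TOWARDS_SUN, sun_amount)

sun = (0.0, 0.3, 1.0)
print(fog_color((0.0, 0.3, 1.0), sun))   # looking straight at the sun
print(fog_color((0.0, 0.0, -1.0), sun))  # looking away from it
```

The returned color would then take the place of the fixed fog color in an ordinary fog blend.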

Creaking Gorge and The Cauldron

Since my last post in July where I finally got a vision down for the level design in Eye of the Temple, I've been feeling super productive adding new areas and features to the game.

In August I added two new areas and in September I've been revamping the in-game UI and the speedrun mode. Only problem is I haven't kept up with these blog posts. To avoid this post getting too long, I'll cover the new areas here and save the UI work for a later post.

Creaking Gorge

Creaking Gorge is an area where you move along and into cliff sides and atop wooden scaffolding. It's by far the most vertical area in the game, spanning more than 50 meters vertically.

The quest for automatic smooth edges for 3d models

I'm currently learning simple 3D modeling so I can make some models for my game. I'm using Blender for modeling.

The models I need to make are fairly simple shapes depicting man-made objects made of stone and metal (though until I get it textured it will look more like plastic). There are a lot of flat surfaces.

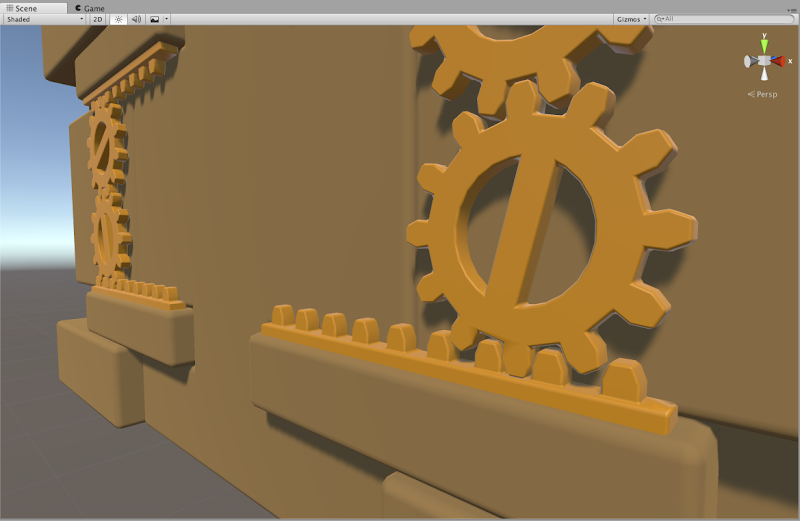

The end result I want is these simple shapes with flat surfaces, but with smooth edges. In the real world, almost no objects have completely sharp edges, and so 3D models without smooth edges tend to look like they're made of paper, like this:

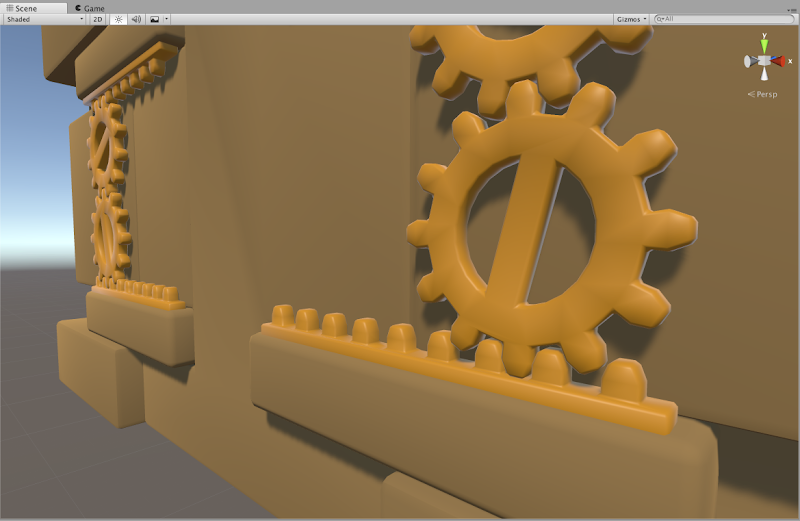

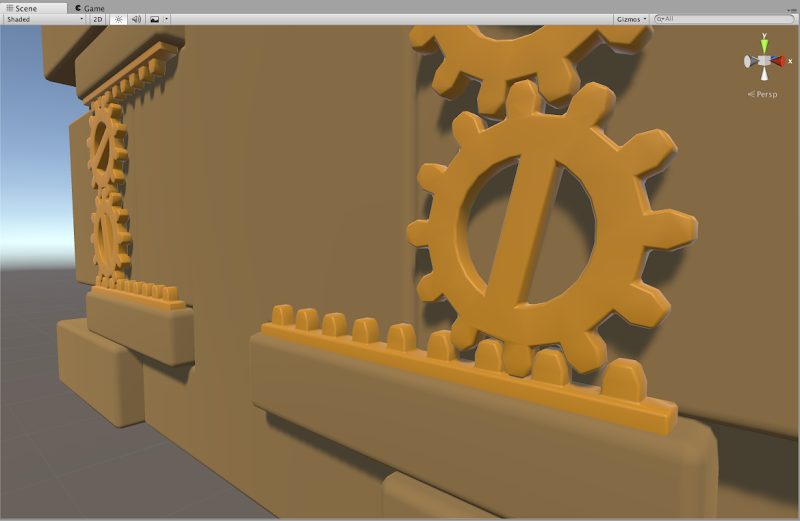

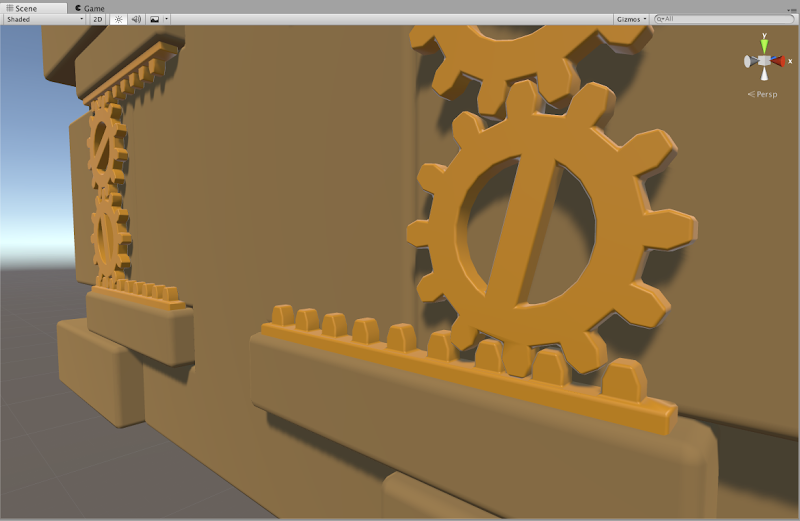

What I want instead is the same shapes but with smooth edges like this:

Here, some edges are very rounded, while others have just a little bit of smoothness in order to not look like paper. No edges here are actually completely sharp.

The two images above show the end result I wanted. It turns out it was much harder to get there than I had expected! Here's the journey of how I got there.

How are smooth edges normally obtained? By a variety of methods. The Blender documentation page on the subject is a bit confusing, talking about many different things without clear separation and with inconsistent use of images.

Edge loops plus subdivision surface modifier

From my research I have gathered that a typical approach is to add edge loops near edges that should be smooth, and then use a Subdivision Surface modifier on the object. This is also mentioned on the documentation page above. This has several problems.

First of all, subdivision creates a lot of polygons which is not great for game use.

Second, adding edge loops is a manual process, and I'm looking for a fully automatic solution. Quick iteration times are important to me: I want to be able to fundamentally change the shape and then shortly after see the updated end result inside the game. For this reason I strongly prefer a non-destructive editing workflow. This means that the parts that make up the model are kept as separate pieces and not "baked" into one model such that they can no longer be separated or manipulated individually.

Adding edge loops means adding a lot of complexity to the model just for the sake of getting smooth edges, which then makes the shape more cumbersome to make major changes to afterwards. Additionally, edge loops can't be added around edges resulting from procedures such as boolean subtraction (carving one object out of another) and similar, at least not without baking/applying the procedure, which is a destructive editing operation.

Edge loops and subdivision are not the way to go, then.

Bevel modifier

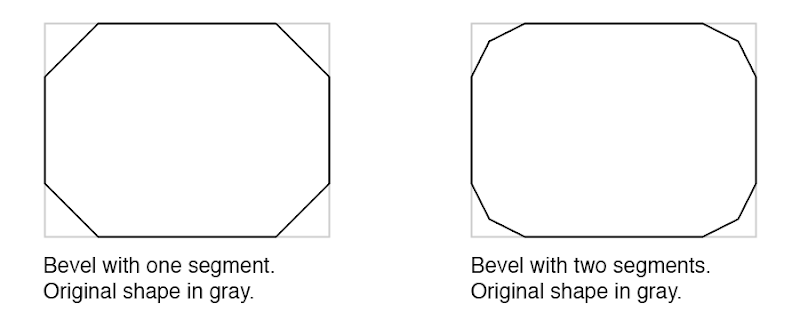

Some posts on the web suggest using a Bevel modifier on the object. This modifier can automatically add bevels of a specified thickness for all edges (or selectively if desired). The Bevel modifier in Blender does what I want in the sense that it's fully automatic and creates sensible geometry without superfluous polygons.

However, on its own the bevel either requires a lot of segments, which is not efficient for use in games (I'd want only one or two segments to keep the poly count low), or, when fewer segments are used, it creates a segmented look rather than smooth edges, as can be seen in the image.

Baking high-poly details into normal maps of low-poly object

Another common approach, especially for games, is to create both a high-poly and a low-poly version of the object. The high-poly one can have all the detail you want, so for example a bevel effect with tons of segments. The low-poly one is kept simple but has the appearance from the high-poly one baked into its normal maps.

This is of course a proven approach for game use, but it seems overly complicated to me for the simple things I want to achieve. Though I haven't tried it out in practice, I suspect it doesn't play well with a non-destructive workflow, and that it adds a lot of overhead and thus slows down iteration.

Bevel and smooth shading

Going back to the bevel approach, what I really want is the geometry created by the Bevel modifier but with smooth shading. The problem is that smooth shading also makes the original flat surfaces appear curved.

Here is my model with bevel and smooth shading. The edges are smooth sure enough, but all the surfaces that were supposed to be flat are curvy too.

Smooth shading works by pretending the surface at each point is facing in a different direction than it actually does. For a given polygon, the faked direction is defined at each of its corners in the form of a normal. A normal is a vector that points out perpendicular to the surface. Only, we can modify normals to point in other directions for our faking purposes.

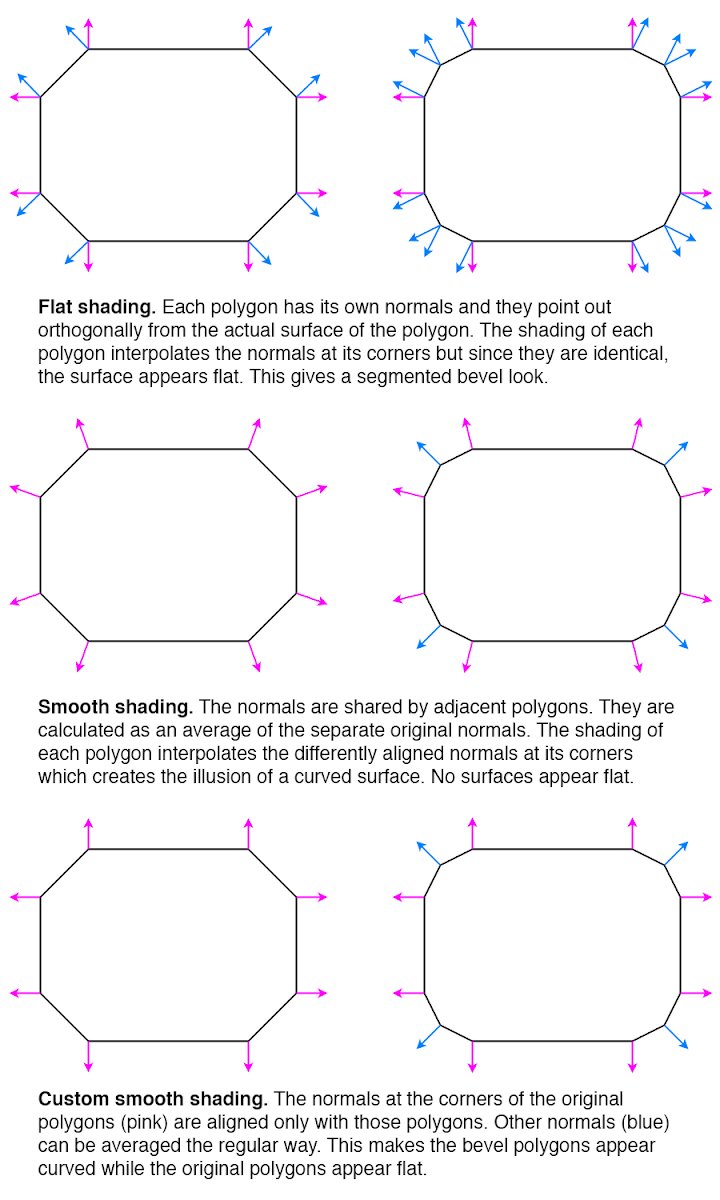

The way that smooth shading typically calculates normals makes all the surfaces appear curved. (There is typically a way to selectively make some surfaces flat, but then they will have sharp edges too.) The diagram below shows the normals for flat shading, for typical smooth shading, and for a third way that is what I would need for my smooth edges.

So how can the third way be achieved? I found a post that asks essentially the same question. The answers there don't really help. One incorrectly concludes that Blender's Auto Smooth feature gives the desired result; it actually doesn't, but the lighting in the posted image is too poor to make it obvious. The other is the usual edge loop suggestion.

When I posted a question myself requesting clarification on the issue, I was pointed to a Blender add-on called Blend4Web. It has a Normal Editing feature with a Face button that seems to be able to align the normals in the desired way, however as a manual workflow, not an automated process. I also found other forum threads discussing the technique.

Using a better smoothing technique

At this point I got the impression there was no way to get the smooth edges I wanted in an automated way inside of Blender, at least without changing the source code or writing my own add-on. Instead I considered an alternative strategy: Since I ultimately use the models in Unity, maybe I could fix the issue there instead.

In Unity I have no way of knowing which polygons are part of bevels and which ones are part of the original surfaces. But it's possible to take advantage of the fact that bevel polygons are usually much smaller.

There is a common technique for calculating averaged smooth normals called face weighted normals / area weighted normals (explained here), which weighs the contributing normals according to the surface areas of the faces (polygons) they belong to. This means that the curvature will be distributed mostly on small polygons, while larger polygons will be flatter (but still slightly curved).

From the discussions I've seen, there is general consensus that this usually produces better results than a simple average (here's one random thread about it). It sounds like Maya uses this technique by default since at least 2014, but smooth shading in Blender doesn't use it or support it (even though people have discussed it and made custom add-ons for it back in 2008), nor does the model importer in Unity (when it's set to recalculate normals).
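A minimal sketch of face-weighted smooth normals for a triangle mesh, written in Python rather than Unity's C#. It relies on the fact that the cross product of two triangle edges has a length of twice the triangle's area, so summing the unnormalized cross products weights each face by its area. The mesh format (a list of shared vertex positions plus index triples) is an assumption for illustration:

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    return (v[0] / length, v[1] / length, v[2] / length)

def smooth_normals(positions, triangles, area_weighted=True):
    """Per-vertex normals averaged from the faces sharing each vertex."""
    sums = [(0.0, 0.0, 0.0)] * len(positions)
    for i0, i1, i2 in triangles:
        p0, p1, p2 = positions[i0], positions[i1], positions[i2]
        n = cross(sub(p1, p0), sub(p2, p0))  # length = 2 * triangle area
        if not area_weighted:
            n = normalize(n)                  # every face counts equally
        for i in (i0, i1, i2):
            s = sums[i]
            sums[i] = (s[0] + n[0], s[1] + n[1], s[2] + n[2])
    return [normalize(s) for s in sums]

# A large flat triangle sharing an edge with a small 45-degree "bevel" triangle.
positions = [(0.0, 0.0, 0.0), (0.0, 10.0, 0.0), (-10.0, 0.0, 0.0), (0.1, 0.0, -0.1)]
triangles = [(0, 1, 2), (0, 3, 1)]
weighted = smooth_normals(positions, triangles)
plain = smooth_normals(positions, triangles, area_weighted=False)
```

On the shared vertices, the area weighted normal stays almost perpendicular to the large face, while the plain average leans 22.5 degrees towards the bevel, halfway between the two faces.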

Custom smoothing in Unity AssetPostprocessor

In Unity it's possible to write AssetPostprocessors that can modify imported objects as part of the import process. This can also be used for modifying an imported mesh. I figured I could use this to calculate the smooth normals in an alternative way that produces the results I want.

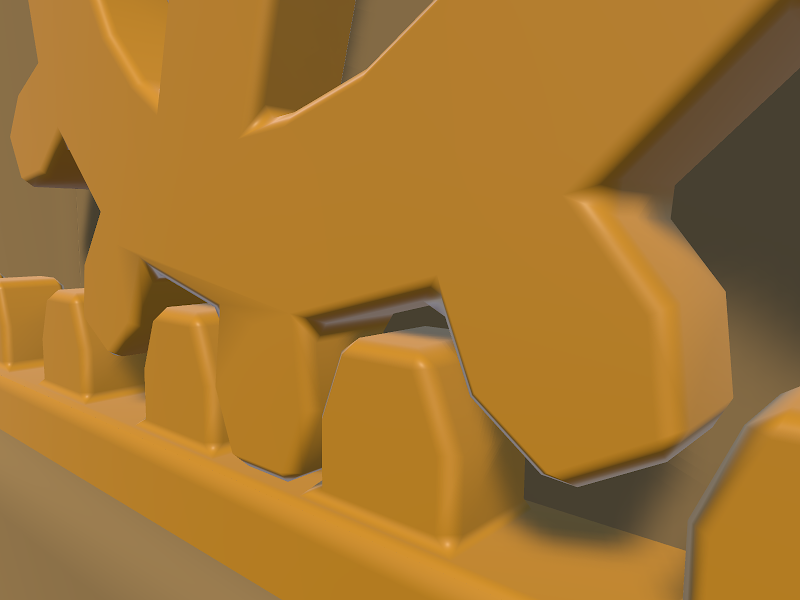

I started by implementing just area weighted normals. This technique still makes the large faces slightly curved. Here is the result.

Honestly, the slight curvature on the large faces can be hard to spot here. Still, I figured I could improve upon it.

I also implemented a feature to let weights smaller than a certain threshold be ignored. For each averaged normal, all the contributing normals are collected in a set, and the largest weight is noted. Any weight smaller than a certain percentage of the largest weight can then be ignored and not included in the average. For my geometry, this worked very well and removed the remaining curvature from the large faces. Here is the final result again.
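The threshold refinement described above could be sketched as follows, for a single vertex. This is not the code from the Gist; the input layout (unit face normals paired with weights such as face areas) and the default 5% threshold are assumptions for illustration:

```python
import math

def weighted_average_normal(contributions, min_relative_weight=0.05):
    """Average face normals at one vertex, ignoring faces whose weight is small
    compared to the largest contributing face.

    contributions: list of (unit_normal, weight) pairs, e.g. weight = face area.
    """
    largest = max(weight for _, weight in contributions)
    cutoff = largest * min_relative_weight
    total = [0.0, 0.0, 0.0]
    for normal, weight in contributions:
        if weight < cutoff:
            continue  # tiny faces (such as bevels) no longer bend the big face's normal
        for axis in range(3):
            total[axis] += normal[axis] * weight
    length = math.sqrt(sum(c * c for c in total))
    return tuple(c / length for c in total)

flat = (0.0, 0.0, 1.0)
bevel = (math.sqrt(0.5), 0.0, math.sqrt(0.5))
side = (1.0, 0.0, 0.0)
```

At a vertex shared by a large face and a bevel face, the bevel is ignored and the normal matches the large face exactly. At a vertex shared only by faces of similar size, such as two bevel segments, they still average normally. With the threshold at zero this reduces to plain area weighting.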

The code is available here as a GitHub Gist. Part of the code is derived from code by Charis Marangos, aka Zoodinger.

Future perspectives

The technique of aligning smooth normals on beveled models with the original (pre-bevel) faces seems to be well understood when you dig a bit, but poorly supported in software. I hope Blender and other 3D software one day will have a "smooth" option for their Bevel modifier which retains the outer-most normal undisturbed.

A simpler prospect is adding support for area weighted normals. This produces almost as good a result for smooth edges, and is a much more widely applicable technique, not specific to bevels or smooth edges at all. That Blender, Unity and other 3D software that support calculating smooth normals do not include this as an option is even more mind-boggling, particularly given how trivial it is to implement. Luckily there are workarounds for it in the form of AssetPostprocessors for Unity and custom add-ons for Blender.

If you do 3D modeling, how do you normally handle smooth edges? Are you happy with the workflows? Do some 3D software have great (automatic!) support for it out of the box?

The Big Forest

I've been continuing my work on the procedural terrain project I wrote about here. I added grass, trees and footstep sounds (crucial!) and it's beginning to really come together as a nice forest to spend some time in.

I made a video of it here. Enjoy!

If you want to learn more about it, have a look at this thread on the procedural generation subreddit.

The Cluster 2015 Retrospective

The Cluster is an exploration game I've been developing in my spare time for some time. You can see all posts about it here. It looks like I didn't write any posts about it for all of 2015, yet I've been far from idle.

By the end of 2014 I had done some ground work for fleshing out the structure of the world regions, but the game still didn't provide visible purpose and direction for the player.

My goal for 2015 was to get The Cluster in a state where it worked as a real game and I could hand it over to people to play it without needing instructions from me. Did The Cluster reach this goal in 2015? Yes and no.

I made a big to-do list with all the items that needed to be done for this to work. (As always, the list was revised continuously.) I did manage to implement all these things so that the game should in theory be meaningfully playable. I consider that in itself a success and a big milestone.

However, I performed a few play tests in the fall, and they revealed some issues. This was not really unexpected. I've developed games and play tested them before, and play testing always reveals issues and shows that things designed to be clearly understandable are not necessarily so. I don't consider this a failure as such; when I decided on my goal for 2015 I didn't make room for extensive iteration based on play test findings. I have already managed to address some of the issues; others will need to be addressed in 2016.

On the plus side, several of the players who tested the game had a good time with it once they got into it with a little bit of help from me. In two instances they continued playing for much longer than I would have expected, and in one instance a play-tester cleared an entire region, which takes several hours. I think only a minority of players can get that engaged with the game in its current state, but it was still highly encouraging to see.

Essentials

Some boring but important stuff just had to be done. A main menu. A pause menu. Fading to black during loading and showing a progress bar. (I found out that estimating progress for procedural generation can be surprisingly tricky and involved. I now have a lot more understanding for unreliable progress bars in general.) Also, upgrading to Unity 5 and fixing some shaders etc.

Enemy combat

I had AI path-finding working long ago, but never wrapped up the AIs into fully functional enemies. In 2015 I implemented enemy bases in the world to give the enemies a place to spawn from and patrol around.

Enemy combat also entailed implementing health systems for player and enemies (with time-based healing for the player), implementing player death and reloading of state, and having the enemies be destroyed when the player enters certain safe zones.

For the combat I decided to return to a combat approach I used long ago where both player and enemies can hold only one piece of ammo at a time (a firestone). Once it's thrown, the player or enemy has to look for a new firestone to pick up before they can attack again. This facilitates gameplay that alternates between attacking and evading. I noticed that the game Feist uses a similar approach (though the old version of my game that used this approach is much older than Feist).

I started using behaviour trees for the high-level control of enemies. This included patrolling between points by default, spotting the player on sight, and pursuing the player while looking for firestones on the ground to use as ammo if not already carrying one, and then returning to patrolling after losing sight of the player for too long. Even AI logic as simple as this turned out to have quite a few complexities and edge cases to handle.
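A stripped-down behaviour tree covering the enemy logic described above might look like the sketch below. The selector and sequence nodes are the standard behaviour tree building blocks; the blackboard keys, action names, and the time limit for losing the player are hypothetical, not the game's actual code:

```python
from typing import Callable, Dict

SUCCESS, FAILURE = "success", "failure"

Blackboard = Dict[str, object]
Node = Callable[[Blackboard], str]

def selector(*children: Node) -> Node:
    """Runs children in order until one succeeds."""
    def run(bb: Blackboard) -> str:
        for child in children:
            if child(bb) == SUCCESS:
                return SUCCESS
        return FAILURE
    return run

def sequence(*children: Node) -> Node:
    """Runs children in order until one fails."""
    def run(bb: Blackboard) -> str:
        for child in children:
            if child(bb) == FAILURE:
                return FAILURE
        return SUCCESS
    return run

def condition(predicate: Callable[[Blackboard], bool]) -> Node:
    return lambda bb: SUCCESS if predicate(bb) else FAILURE

def action(name: str) -> Node:
    def run(bb: Blackboard) -> str:
        bb["action"] = name  # a real game would start movement/animation here
        return SUCCESS
    return run

LOSE_SIGHT_SECONDS = 5.0  # hypothetical

enemy_ai = selector(
    # Engage while the player has been seen recently enough.
    sequence(
        condition(lambda bb: bb["seconds_since_player_seen"] < LOSE_SIGHT_SECONDS),
        selector(
            sequence(condition(lambda bb: bb["has_firestone"]), action("pursue_and_throw")),
            action("find_firestone"),
        ),
    ),
    # Otherwise fall back to patrolling.
    action("patrol"),
)
```

Perception (resetting `seconds_since_player_seen` when the player is spotted) is assumed to happen outside the tree. A real implementation would also need a "running" state for actions that span several frames, which this sketch leaves out.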

Conveying the world structure

The other big task on my list after enemy combat was making the world structure comprehensible and functional to the player.

Worlds in The Cluster are divided into large regions. Each region has a central village and multiple shrines. All of those function as safe zones that instantly destroy enemies when entered and save the player's progress. In addition, a region has multiple artefact locations that are initially unknown and must be found and activated by the player. This basic structure was already in place by the end of 2014, but not yet communicated to the player in any way.

I've done my share of game design but I'm still not super experienced as a game designer. It took a lot of pondering and iteration to figure out how to effectively communicate everything that's needed to the player, and even then it's still far from perfect. In the end I've used several different ways to communicate the world structure that work in conjunction:

- Supporting it through the game mechanics.

- In-world as part of how the world looks.

- In meta communication, such as a map screen.

- Through text explanations / dialogue.

Supporting world structure through game mechanics

There are a number of game mechanics that are designed to support the world structure.

The artefacts that are hidden around the region can be discovered by chance by exploring randomly, but this can take quite a while and requires self-direction and determination that not all players have. To provide more of a direction, I introduced a mechanic that the shrines can reveal the approximate location of the nearest undiscovered artefact. This gives the player a smaller area to go towards and then search within.

In order to sustain most of the mystery for as long as possible, a new approximate artefact location can't be revealed until the existing one has been found. This also helps give the player a single clear goal, though they are still free to explore elsewhere if desired.
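The shrine mechanic could be sketched like this: pick the nearest undiscovered artefact and reveal a circle that is guaranteed to contain it without being centered on it, since a centered circle would give the exact position away. It also keeps the rule that a new hint is only given once the current one has been found. The 2D positions, the radius, and the way the circle is offset are assumptions for illustration:

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec2 = Tuple[float, float]

@dataclass
class Artefact:
    position: Vec2
    discovered: bool = False

@dataclass
class Hint:
    target: Artefact
    center: Vec2
    radius: float

def reveal_hint(shrine: Vec2, artefacts: List[Artefact], current: Optional[Hint],
                rng: random.Random, radius: float = 100.0) -> Optional[Hint]:
    # Only one open hint at a time: keep it until its artefact is found.
    if current is not None and not current.target.discovered:
        return current
    candidates = [a for a in artefacts if not a.discovered]
    if not candidates:
        return None
    target = min(candidates, key=lambda a: math.dist(shrine, a.position))
    # Offset the circle center randomly, but by less than the radius,
    # so the artefact is always inside the revealed circle.
    angle = rng.uniform(0.0, 2.0 * math.pi)
    offset = rng.uniform(0.0, 0.8 * radius)
    center = (target.position[0] + offset * math.cos(angle),
              target.position[1] + offset * math.sin(angle))
    return Hint(target, center, radius)
```

Passing in a seeded `random.Random` keeps the revealed circle reproducible, which fits a procedurally generated world where the same seed should give the same results.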

Once an artefact is found, a shortcut in the form of a travel tube can be used to quickly get back to a more central place in the region. Initially the tube exit would be close to a shrine, but the player might subsequently miss the shrine and be aimless about where to go next. Based on early play tests, I changed the tubes to lead directly back to a shrine. This way the player can immediately choose to have a new approximate artefact location revealed.

World structure communicated in-world

I got the idea to create in-world road signs that point towards nearby locations in the region, such as the village and the various shrines. This both concretely provides directions for the player and increases immersion.

Particularly for a procedurally generated world, the signage can also help reinforce the notion that there is structure and reason to the world, countering the common preconception that procedurally generated worlds are entirely random.

This entailed generating names for the locations and figuring out which data structures to store them in. The signs can point to locations which are far outside the range of the world that is currently loaded at the max planning level. As such, the names of locations need to be generated as part of the overall region planning rather than as part of the more detailed but shorter range planning of individual places.
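One way to make names available far beyond the loaded range is to derive them deterministically from the region's seed and each location's index in the region plan, so the names can be computed without generating the places themselves. The syllable list and the seeding scheme below are hypothetical:

```python
import random

SYLLABLES = ["ka", "lo", "mi", "ren", "tha", "su", "vel", "dor", "an", "is"]  # hypothetical

def location_name(region_seed: int, location_index: int) -> str:
    """The same inputs always give the same name, no matter which place is loaded."""
    rng = random.Random(region_seed * 1_000_003 + location_index)
    count = rng.randint(2, 3)
    return "".join(rng.choice(SYLLABLES) for _ in range(count)).capitalize()

def plan_region_names(region_seed: int, location_count: int) -> list:
    """Run once during region planning; signs then just look the names up."""
    return [location_name(region_seed, i) for i in range(location_count)]
```

Storing the resulting list alongside the region plan means a sign generated in one place and the location it points to always agree on the name.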

Next, I needed to make key locations look the part. I'm not a modeller, but I created some simple placeholder models and structures which at least convey the idea of a village and shrines.

Improved map screen

I had created a detailed map for the game long ago, but that didn't effectively communicate the larger overall structure of a region.

To remedy this, I created a new map that shows the region structure. I've gone back and forth on how the two maps should integrate, but I eventually concluded that combining them in one view shows too much confusing information at once. They are now mostly separate, with the map screen transitioning between the two as the player zooms in or out.

Here are examples of the detail map and the region map:

Apart from the map itself, I also added icons to indicate the various locations as well as the position of the player. Certain locations in the game can be known but not yet discovered. This means the approximate location is known but not the exact position. These locations are marked with a question mark in the icon and a dotted circle around it to indicate the area in which to search for the location.

Part of the work was also to keep track of discovered locations in the save system.
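The known-versus-discovered distinction maps naturally onto a small state enum. The sketch below assumes three states, hypothetical names, and a save format that stores only each location's state keyed by name, on the assumption that positions can be regenerated from the world seed. None of it is taken from the game itself.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional


class LocationState(Enum):
    UNKNOWN = 0     # not shown on the map at all
    KNOWN = 1       # approximate area known, exact position not
    DISCOVERED = 2  # exact position known


@dataclass
class MapLocation:
    name: str
    x: float
    z: float
    state: LocationState = LocationState.UNKNOWN
    # Centre and radius of the dotted search circle shown for KNOWN locations.
    hint_x: float = 0.0
    hint_z: float = 0.0
    hint_radius: float = 0.0


def map_marker(loc: MapLocation) -> Optional[dict]:
    if loc.state is LocationState.UNKNOWN:
        return None
    if loc.state is LocationState.KNOWN:
        # Question-mark icon drawn at the search area, not at the true position.
        return {"icon": "question", "x": loc.hint_x, "z": loc.hint_z,
                "dotted_radius": loc.hint_radius}
    return {"icon": "location", "x": loc.x, "z": loc.z, "dotted_radius": None}


def save_discovery(locations: List[MapLocation]) -> Dict[str, str]:
    # Persist only the state per location name (assumed save format).
    return {loc.name: loc.state.name for loc in locations}


def load_discovery(locations: List[MapLocation], saved: Dict[str, str]) -> None:
    for loc in locations:
        loc.state = LocationState[saved.get(loc.name, "UNKNOWN")]
```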

Dialogue system

Communicating structure and purpose through in-world signage and the map screen was not sufficient, so I started implementing a dialogue system that lets characters in the game explain things.

This too proved to be quite involved. Besides the system for displaying text on screen in a nice way, there also needed to be a whole supporting system for controlling which dialogues should be shown where, depending on the state of the world.

This can be complex enough for a manually designed game. For a procedural game, an additional concern is how to design the code so one-off dialogue triggers can be placed in among the procedural algorithms that generate hundreds of different places, without the code becoming cluttered in undesirable ways.
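One way to keep one-off dialogue out of the generation code, sketched here purely as an assumption rather than as the game's design: generators only tag a place with a trigger id, and a separate director maps trigger ids plus world state to dialogue. `DialogueRule`, `DialogueDirector` and the plain-dict world state are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class DialogueRule:
    trigger: str                      # tag a generator attaches to a place, e.g. "elder"
    condition: Callable[[dict], bool] # predicate on the (hypothetical) world-state dict
    lines: List[str]
    once: bool = True                 # one-off dialogue is shown at most once


@dataclass
class DialogueDirector:
    rules: List[DialogueRule] = field(default_factory=list)
    shown: Set[int] = field(default_factory=set)

    def register(self, rule: DialogueRule) -> None:
        self.rules.append(rule)

    def dialogue_for(self, trigger: str, world: dict) -> Optional[List[str]]:
        # First matching rule wins, so register more specific rules first.
        for i, rule in enumerate(self.rules):
            if rule.trigger != trigger or (rule.once and i in self.shown):
                continue
            if rule.condition(world):
                self.shown.add(i)
                return rule.lines
        return None
```

The procedural code then only needs to emit a trigger tag per place; all decisions about what is said, and when, live in one table.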

What's next?

I hope to get The Cluster into a state where it's fully playable without any instructions in the first quarter of 2016.

After that I want to expand on the gameplay to make it more engaging and more varied.

As part of that, I anticipate that I may need to revert the graphics in the game to a simpler look for a while. I've enjoyed developing the gameplay and graphics of the game in parallel, since having something nice to look at is very satisfying. However, now that I need to ramp up development of more gameplay elements, having to make each new gameplay gizmo match the same level of graphics will slow down iteration. For that reason I'll probably give the game more of a prototype look for a while, so I can develop new gameplay with little or no time spent on graphics and looks.

Nevertheless, even with a much simpler look, I still want to retain some level of atmosphere, since one of the things I want to implement is more variety in moods. This builds on the game jam project A Study in Composition, which I worked on this year.

If you are interested in being a play tester for early builds of The Cluster, let me know. I can't say when I will start the next round of play testing, but I'm building up a list of people I can contact once the time is right. Play testing may involve talking and screen-sharing over e.g. Skype since I'll need to be able to observe the play session.

If you want to follow the development of The Cluster, you can follow The Cluster on Twitter or follow me.

A Study in Composition

Two weeks ago I participated in Exile Game Jam - a cosy jam located remotely an hour's drive outside of Copenhagen. There was a suggested theme of "non-game" this year.

This partially overlapped with the online Procedural Generation Jam (#procjam), which ran throughout last week with the simple theme of "Make something that makes something".

I wanted to make a combined entry for both jams. My idea was to create procedural landscapes with a focus on evoking a wide variety of moods with simple means. I formed a team with Morten Nobel-Jørgensen, Andreas Frostholm offered to help with soundscapes, and we got to work.

You can download the final result here: A Study in Composition at itch.io. You can also watch a video of it here:

Furthermore, we decided to release the source code under the MIT license. You can see and download the Unity project folder at GitHub.

Motivation

The motivation of the project was primarily to learn about how to create evocative and striking landscapes with simple means, particularly by creating harmonic and expressive color palettes. The name "A Study in Composition" is meant to convey this in a similar sense as it's used in classical art.

Making the demo

Each scene consists of just a flat plane and a distribution of trees, all of it with simple colors without textures. Additionally there is a light source, variable fog amount, and sometimes a star-field. The trees are procedurally generated using L-systems and are distributed in many different ways using multiple noise functions.

Tree generation

Morten had created procedural trees with L-systems in previous work that we could make use of in this project. This was a huge head start. During the project he added support for leaves and a simple wind effect, and improved the algorithm.
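For readers unfamiliar with L-systems: a string is rewritten repeatedly by rules, then interpreted as turtle-drawing commands. The sketch below is a textbook bracketed L-system in 2D, not Morten's generator (the demo's trees are 3D). The rule `F -> F[+F]F[-F]F` and the 25° angle are arbitrary example values.

```python
import math
from typing import Dict, List, Tuple

Segment = Tuple[Tuple[float, float], Tuple[float, float]]


def expand(axiom: str, rules: Dict[str, str], iterations: int) -> str:
    # Rewrite every symbol in parallel; symbols without a rule are kept as-is.
    s = axiom
    for _ in range(iterations):
        s = "".join(rules.get(ch, ch) for ch in s)
    return s


def interpret(commands: str, step: float = 1.0, angle_deg: float = 25.0) -> List[Segment]:
    # Turtle interpretation: F = draw forward, +/- = turn, [ ] = push/pop a branch point.
    x, y, heading = 0.0, 0.0, 90.0  # start at the origin, pointing up
    stack = []
    segments: List[Segment] = []
    for ch in commands:
        if ch == "F":
            nx = x + step * math.cos(math.radians(heading))
            ny = y + step * math.sin(math.radians(heading))
            segments.append(((x, y), (nx, ny)))
            x, y = nx, ny
        elif ch == "+":
            heading += angle_deg
        elif ch == "-":
            heading -= angle_deg
        elif ch == "[":
            stack.append((x, y, heading))
        elif ch == "]":
            x, y, heading = stack.pop()
    return segments
```

For example, `interpret(expand("F", {"F": "F[+F]F[-F]F"}, 3))` yields the line segments of a small branching plant.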

Distribution of trees

We use a continuous noise function to distribute the trees. The function is evaluated twice - once at low frequency and once at higher frequency - and the values (between 0 and 1) are multiplied together. Simply put, this creates large clumps consisting of smaller clumps.

The resulting function is still between 0 and 1. For each position in a grid, we evaluate the function. If the function value is greater than a certain threshold, we place a tree.

The threshold value is different from scene to scene. We also add a random value to the threshold for each tree placement to make the edges of the clumps of trees more fuzzy. This randomness amount also varies from scene to scene. The result can be anything from dense forests to sparse savannas, and within a single scene, trees are not uniformly placed but clump nicely in groups.
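The distribution step can be sketched in Python. The standard library has no Perlin or simplex noise, so a simple hash-based value noise stands in for the continuous noise function here. The frequencies, grid size and the `_lattice` hash constants are assumptions for illustration, not the demo's values.

```python
import math
import random


def _lattice(ix: int, iy: int, seed: int) -> float:
    # Deterministic pseudo-random value in [0, 1] per integer lattice point.
    h = (ix * 374761393 + iy * 668265263 + seed * 1442695041) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    return (h ^ (h >> 16)) / 0xFFFFFFFF


def value_noise(x: float, y: float, seed: int = 0) -> float:
    # Smooth 2D value noise in [0, 1]: smoothstep-interpolated lattice values.
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = x - ix, y - iy
    sx, sy = fx * fx * (3 - 2 * fx), fy * fy * (3 - 2 * fy)
    top = _lattice(ix, iy, seed) + (_lattice(ix + 1, iy, seed) - _lattice(ix, iy, seed)) * sx
    bottom = _lattice(ix, iy + 1, seed) + (_lattice(ix + 1, iy + 1, seed) - _lattice(ix, iy + 1, seed)) * sx
    return top + (bottom - top) * sy


def place_trees(size, threshold, fuzz, low_freq=0.02, high_freq=0.15, seed=0):
    rng = random.Random(seed)
    trees = []
    for gx in range(size):
        for gz in range(size):
            # Large clumps (low frequency) times smaller clumps (high frequency); still in [0, 1].
            density = (value_noise(gx * low_freq, gz * low_freq, seed)
                       * value_noise(gx * high_freq, gz * high_freq, seed + 1))
            # A per-tree random offset on the threshold makes clump edges fuzzy.
            if density > threshold + rng.uniform(-fuzz, fuzz):
                trees.append((gx, gz))
    return trees
```

A low threshold gives dense forest, a high one sparse savanna; `fuzz` plays the role of the per-scene randomness amount.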

Color palettes

An important element of evoking different moods despite the simple means is the color selection. First an initial color is chosen. This is done in HSV color space, where hue, saturation, and value are all values between 0 and 1. (The Value in HSV means brightness; not to be confused with lightness.)

A palette is created from the initial color by creating either a pair of complementary colors from it, or a color triad. The initial color determines the saturation and value of all the colors in the palette. This is a simple way to make the palette look consistent and harmonic.
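A sketch of this palette construction using Python's `colorsys` module. Picking the initial color uniformly at random and choosing between a pair and a triad with equal probability are assumptions; the extra color variations mentioned next are not modeled.

```python
import colorsys
import random


def make_palette(rng: random.Random):
    # Initial color in HSV, all components in [0, 1] (assumed uniform random).
    h, s, v = rng.random(), rng.random(), rng.random()
    if rng.random() < 0.5:
        offsets = [0.0, 0.5]          # complementary pair: opposite hues
    else:
        offsets = [0.0, 1 / 3, 2 / 3] # triad: hues evenly spaced around the wheel
    # Every color shares the initial saturation and value, keeping the palette harmonic.
    return [((h + o) % 1.0, s, v) for o in offsets]


def to_rgb(hsv):
    return colorsys.hsv_to_rgb(*hsv)
```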

Some extra color variations are created, and each scene element is then assigned a color from this palette. Each element knows its "normal" color and will attempt to choose a color from the palette similar to that. This will often result in natural landscapes with green grass, blue sky, brown branches, and green to red leaves. Sometimes though, nothing close to those colors will be available in the palette, and the result may be more surrealistic.
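Matching an element's "normal" color to the palette can be as simple as a nearest-neighbor search. This sketch assumes Euclidean distance in RGB space, which is only one possible metric; a hue-aware distance would behave differently. The function name is hypothetical.

```python
import colorsys


def closest_palette_color(normal_rgb, palette_hsv):
    # Pick the palette entry (HSV) whose RGB form is nearest to the element's normal color.
    def dist2(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(palette_hsv, key=lambda hsv: dist2(colorsys.hsv_to_rgb(*hsv), normal_rgb))
```

With a red/green/blue triad, a grass-like normal color lands on green and a sky-like one on blue; with a palette lacking anything near green, grass simply takes whatever is closest, which is where the surreal scenes come from.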

One thing I found during development was that palettes with low value (brightness) and high saturation always seemed to look bad. While I don't know for sure, my theory is that it's related to night vision. In our demo, a dark palette makes everything darker, including the sky, so it's synonymous with a darker light level, meaning dusk, overcast, or night time environments. In low-light environments, the color vision ability of the human eye becomes less effective, and the night vision ability - which is in gray-scale only - plays a larger role. So I think there's an expectation that low light environments don't have saturated colors, since our color vision is mostly out of play.

In any case, to avoid the unpleasant-looking saturated dark colors, we simply multiplied the saturation by the value (brightness).
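The fix amounts to one multiplication per color. A sketch with hypothetical function names, applied to HSV triples in the 0 to 1 range:

```python
def tame_dark_saturation(h, s, v):
    # Saturation scaled by value: dark colors turn grayer, bright colors keep their saturation.
    return h, s * v, v


def tame_palette(palette):
    return [tame_dark_saturation(*c) for c in palette]
```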

Soundscapes

Two thirds of the way into development, we showed the demo to Andreas, who was making sounds for other jam projects too. In a short amount of time he managed to bang out soundscapes that added a lot of atmosphere to the demo while having zero constraints on how they should be played. The sound files had different lengths but were each either non-rhythmic or only sporadically rhythmic, and they could be played on top of each other randomly and still sound good. The result is not always harmonic, but it intentionally uses the disharmony to create hypnotic soundscapes that weave between beautiful calm and eerie.

The sound files sounded fine all playing simultaneously at the same volume, but I added some extra variety by randomly adjusting the volume levels.

Cinematography

During development of the demo, I got tired of walking around manually in a first-person view and pressing a button to change the environment. It seemed unnecessary for what we were doing, so we decided to make the camera movement and scene changes automatic instead. Non-games were encouraged at Exile and fully accepted at ProcJam anyway.

For camera movement we initially had the camera zooming quickly past the trees, but following a tip from Tim Garbos to slow it down a lot really let the scenes come into their own. Late in the process we settled on a few variations of camera movement: successive shots would vary between moving the camera forward or panning sideways left or right. They would also vary between the camera being positioned at eye height (most common) or above the trees for a grander overview (less common).

We experimented with different ways of fading between shots. A cross-fade was impractical due to the need to have two scenes active at the same time, but we tried fading to black or white. Frequent fading detracted from the experience, though. In the end we used no-frills cuts, but had every third cut bridged by a dramatic cut to black inspired by the opening of Vanilla Sky. I joked that we should win the award for pretentiousness if the jam had one.

Some tweaks were made after the Exile Jam was over, while ProcJam was still running. I made each group of three scenes between black cuts thematically coherent by keeping certain variables constant between them. While most variables are randomized in every new scene, the palette saturation and value, the fog amount, and the camera movement mode are only changed when a "black cut" happens. This lets you experience small variations of a theme, with the black cuts resetting the senses between themes.
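The shot sequencing from the last two sections can be sketched as follows. Which variables count as "themed" comes from the text above; the 20% chance of an overhead shot, the single `hue` stand-in for all per-scene variables, and every name here are assumptions.

```python
import random
from dataclasses import dataclass

CAMERA_MODES = ["forward", "pan_left", "pan_right"]


@dataclass
class Theme:
    saturation: float
    value: float
    fog: float
    camera_mode: str


def new_theme(rng: random.Random) -> Theme:
    return Theme(rng.random(), rng.random(), rng.random(), rng.choice(CAMERA_MODES))


def shot_sequence(count: int, seed: int = 0):
    rng = random.Random(seed)
    theme = new_theme(rng)
    shots = []
    for i in range(count):
        # Every third cut goes through black.
        black_cut = i > 0 and i % 3 == 0
        if black_cut:
            theme = new_theme(rng)  # themed variables only change at a black cut
        shots.append({
            "black_cut_before": black_cut,
            "theme": theme,
            "hue": rng.random(),                # stand-in for variables re-randomized every scene
            "above_trees": rng.random() < 0.2,  # eye height is more common (assumed 80/20 split)
        })
    return shots
```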

Future work

While there are a lot of ways the demo could be expanded and improved, we don't have any future work planned for this demo in itself. Personally, I'm going to apply what I've learned about creating variety in environments to my other procedural projects.

We've also made the source code for this demo available and if you do anything with it, we'd love to hear about it!

The Cluster 2014 Retrospective

The development of my exploration game The Cluster, which I'm creating in my spare time, marches ever onwards, and 2014 saw some nice improvements to it.

Let's have a look back in the form of embedded tweets from throughout the year with added explanations.

Worlds and world structure

Toward the end of 2013 I had implemented the concept of huge worlds in the game, and I spent a good part of 2014 beginning to add more structure and purpose to these worlds.

Each world is divided into regions with pathways connecting artefacts, connections to neighbor regions, and the region hub. I used a minimum spanning tree algorithm to determine nicely balanced connections, and tweaking the weights used for the connections to change the overall feel of the structure is always fun.
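A sketch of the idea using Kruskal's algorithm over all pairs of points in a region (hub, artefacts, neighbor connections). The post doesn't say which MST algorithm or weights the game uses; the `hub_bias` factor is a hypothetical example of how tweaking weights can change the feel of the structure.

```python
import math
from itertools import combinations


def region_paths(points, hub_index, hub_bias=1.0):
    """Kruskal's minimum spanning tree over all point pairs; returns (i, j) edges."""
    def weight(i, j):
        d = math.dist(points[i], points[j])
        # Hypothetical tweak: hub_bias < 1 makes edges to the hub cheaper, giving a more star-like layout.
        return d * hub_bias if hub_index in (i, j) else d

    parent = list(range(len(points)))

    def find(i):
        # Union-find root lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    edges = []
    for i, j in sorted(combinations(range(len(points)), 2), key=lambda e: weight(*e)):
        ri, rj = find(i), find(j)
        if ri != rj:  # only keep edges that join two separate components
            parent[ri] = rj
            edges.append((i, j))
    return edges
```

With `hub_bias=1.0` the tree follows plain distances and tends to form chains; lowering it pulls more paths directly through the hub.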

New name

In the early 2D iteration of my game, it was called Cavex, because it had caves and weird spelling was the thing to do to make names unique. Then since 2010 I used the code name EaS after the still undisclosed names of the protagonists, E. and S. This year I decided to name the game The Cluster as a reference to the place where the game takes place.

Spherical atmosphere

The game takes place on large (though not planet-sized) floating worlds. Skyboxes with fixed sky gradients don't fit well with this, but finding a good alternative is tricky. I finally managed to produce shaders that create the effect of spherical fog (and atmosphere is like very thin fog), which elegantly solved the problem.

The atmosphere now looks correct (meaning nice, not physically correct) both when inside and when moving outside of it. The shader was a combination of existing work from the community and some extensive tweaks and changes by myself. I've posted my spherical fog shader here.
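The shader itself isn't reproduced here. As an illustration of the underlying geometry only, this Python sketch computes how much of a view ray lies inside a fog sphere and applies exponential fog to that length, so the result behaves sensibly whether the camera is inside or outside the sphere. The function name and parameters are assumptions; a real shader would do this per pixel on the GPU.

```python
import math


def sphere_fog_amount(origin, direction, max_dist, center, radius, density):
    """Fraction of fog color to blend in, counting only the ray length inside the sphere.

    direction must be normalized; max_dist is the distance to the visible surface
    (or a very large value for sky pixels).
    """
    oc = [o - c for o, c in zip(origin, center)]
    # Ray-sphere intersection: t^2 + 2bt + c = 0 with b = d.oc, c = |oc|^2 - r^2.
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc <= 0.0:
        return 0.0                      # ray misses the sphere entirely
    root = math.sqrt(disc)
    t_enter = max(-b - root, 0.0)       # clamp: the camera may already be inside
    t_exit = min(-b + root, max_dist)   # stop at the visible surface
    length = max(t_exit - t_enter, 0.0)
    return 1.0 - math.exp(-density * length)  # exponential fog over the in-sphere length
```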

Integrating large world structures with terrain

The game world in The Cluster consists of a large grid of areas, though you wouldn't be able to tell where the cell borders are because it's completely seamless. Still, the background hills used to be controlled by each area individually, based solely on whether each of its four corners was inside or outside of the ground. I changed this to be more tightly integrated with the overall shape of the world and some large-scale noise functions.

The new overall shapes of the hills have greater variety and also fit the shape of the world better.

I also re-enabled features of the terrain I had implemented years ago but which had gotten lost in some refactoring at some point. Namely, I have perturbation functions that take a regular smooth noise function and make it more blocky. I prefer this embracing of block shapes over the smooth but pixelated look of e.g. Minecraft.

Tubes

As part of creating more structure in the regions, I realized the need to reduce boring backtracking after having reached a remote goal. I implemented tubes that can quickly transport the player back. I may use them for other purposes too. Unlike tubes (pipes) in Mario, these tubes are 100% connected in-world, so they are not just magic teleports in tube form.

What's next?

It's no secret that most aspects of The Cluster's gameplay are only loosely defined, despite all the good advice about making sure to "find the fun" as early as possible. Being able to ignore sound advice is one of the benefits of a spare-time project!

However, after laying the groundwork for fleshing out the structure of the world regions this year, I've gotten some more concrete ideas for how the core of the game is going to function. I won't reveal more here, but stay tuned!

Two new images in graphics gallery

I've added two new images to the Computer Graphics Gallery. One is a very simplistic image with an anti-slogan. The other is a drawing I've made. Yes, a drawing for once!

New Computer Graphics Gallery

I've put up a new Computer Graphics Gallery. It's not very big yet, but some of the images there have a kind of personal touch not found in my 3D Graphics Gallery. Go have a look. :)

Update: The Computer Graphics Gallery has later been merged with the 3D Graphics Gallery.

New Outside Gallery

I've put up an Outside Gallery. It's a gallery featuring 3D images made by other people, but where a tiny little bit of influence from me can be found.

New site name "Rune's World" and new 3D image

"The RSJ Website" has changed name to Rune's World, but the address remains the same. The design is improved too. What do you think? I've also added a funny new image called "No More Chrome Spheres Please!" to the 3D Gallery.